In our previous article, we explored the historical evolution of Object-Oriented Programming and the limits it hits under concurrency. Today, we'll focus on the concrete failures that appear when several threads touch the same mutable data—problems that have plagued developers for decades.

Series Navigation: Part 1 | Current article: Pitfalls of Shared State | Part 3

The Fundamental Problem: Shared Mutable State

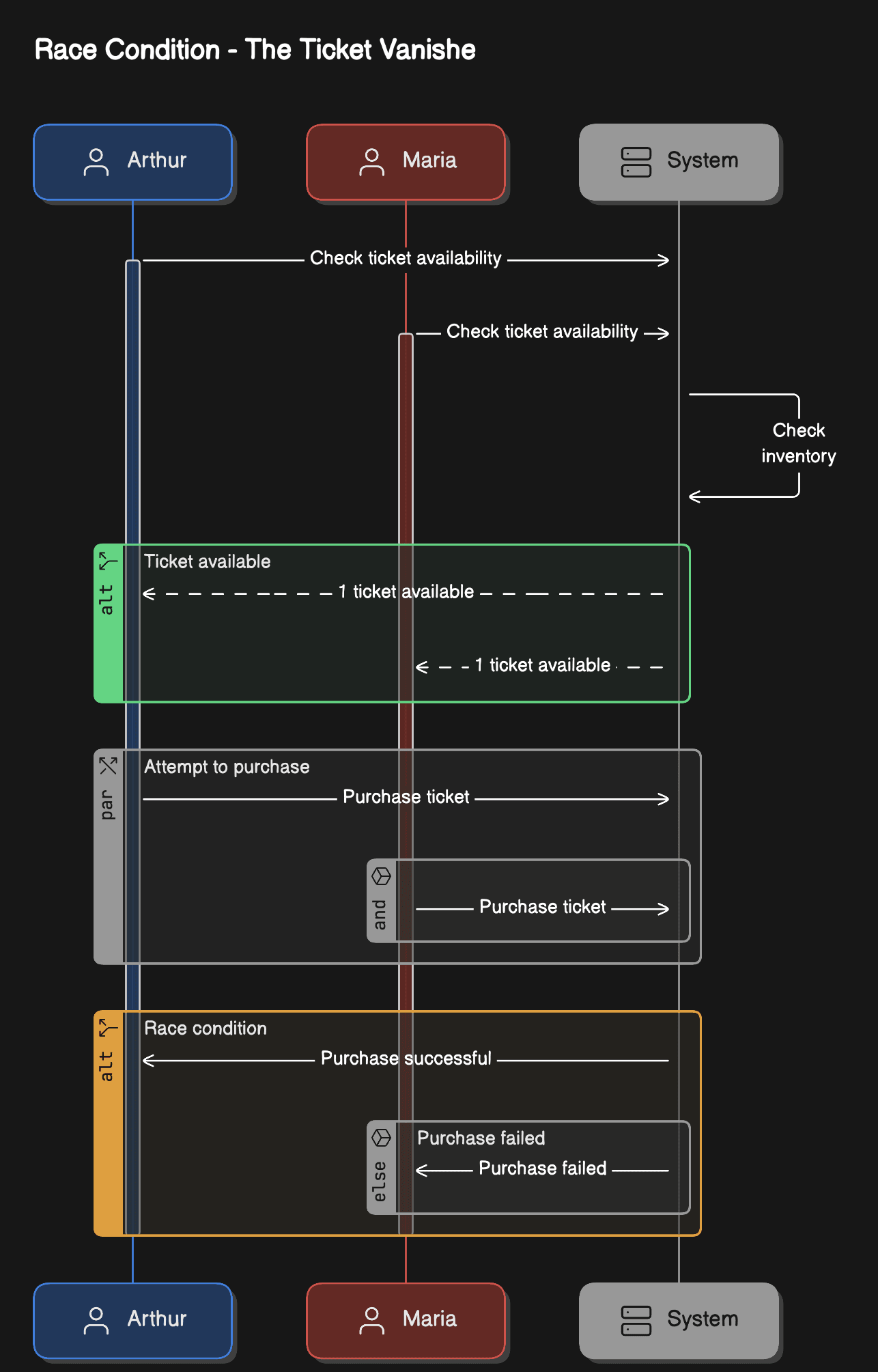

Imagine Arthur and Maria both trying to purchase the last available ticket for a concert through a web application. Both click "Buy Now" at exactly the same time. What happens next illustrates the core challenges of concurrent programming:
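A minimal sketch of the kind of code that could sit behind that "Buy Now" button. The class `NaiveTicketService` and its methods are hypothetical names chosen for illustration; only the `availableTickets` counter comes from the scenario itself. The code is correct when a single thread calls it, and nothing in it stops two threads from running the check at the same moment.

```java
// Hypothetical ticket service: fine for one caller, unsafe for concurrent callers.
final class NaiveTicketService {
    private int availableTickets;   // shared mutable state, no synchronization

    NaiveTicketService(int initialTickets) {
        this.availableTickets = initialTickets;
    }

    // Check-then-act: the check and the decrement are two separate steps,
    // so another thread can run its own check in between them.
    boolean purchase() {
        if (availableTickets > 0) {   // step 1: check
            availableTickets--;       // step 2: act
            return true;
        }
        return false;
    }

    int remaining() {
        return availableTickets;
    }
}
```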

Without proper synchronization, both Arthur and Maria might see availableTickets > 0, leading to both "successfully" purchasing the same ticket.

The Four Horsemen of Concurrent Programming

When multiple threads interact with shared state, four primary problems can arise: race conditions, deadlocks, livelocks, and starvation.

Understanding Each Concurrency Failure Mode

Let's examine how these problems manifest in real systems:

State diagram showing the four primary concurrency failure modes and how they lead to system problems. The Actor Model eliminates these issues through message passing and sequential processing within actors.

1. Race Conditions

Race conditions occur when the outcome of a program depends on the timing and interleaving of threads. The ticket example above is a classic race condition.
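The bad interleaving normally depends on luck, which makes it hard to reproduce. The sketch below forces it on purpose, assuming a hypothetical `RaceReplay` class: a `CyclicBarrier` holds each buyer after the check until the other buyer has checked too. The barrier exists only to make the demonstration deterministic; real code hits the same ordering by accident.

```java
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.atomic.AtomicInteger;

final class RaceReplay {
    private int availableTickets = 1;                                   // the last ticket
    private final AtomicInteger confirmedSales = new AtomicInteger();
    private final CyclicBarrier bothHaveChecked = new CyclicBarrier(2);

    private void buy() {
        try {
            boolean sawTicket = availableTickets > 0;   // check
            bothHaveChecked.await();                    // wait until the other buyer has checked too
            if (sawTicket) {
                availableTickets--;                     // act on a check that is now stale
                confirmedSales.incrementAndGet();
            }
        } catch (Exception e) {
            throw new IllegalStateException(e);
        }
    }

    // Runs both buyers and reports how many purchases were confirmed.
    static int confirmedSalesForLastTicket() {
        RaceReplay shop = new RaceReplay();
        Thread arthur = new Thread(shop::buy, "arthur");
        Thread maria = new Thread(shop::buy, "maria");
        arthur.start();
        maria.start();
        try {
            arthur.join();
            maria.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return shop.confirmedSales.get();   // 2 confirmations for 1 ticket
    }
}
```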

The result? The system thinks it sold two tickets when only one was available, leading to data corruption and unhappy customers.

This sequence diagram visualizes the exact timing issue in the race condition. Both Arthur and Maria check availability before either decrements, resulting in both "successfully" purchasing the same ticket.

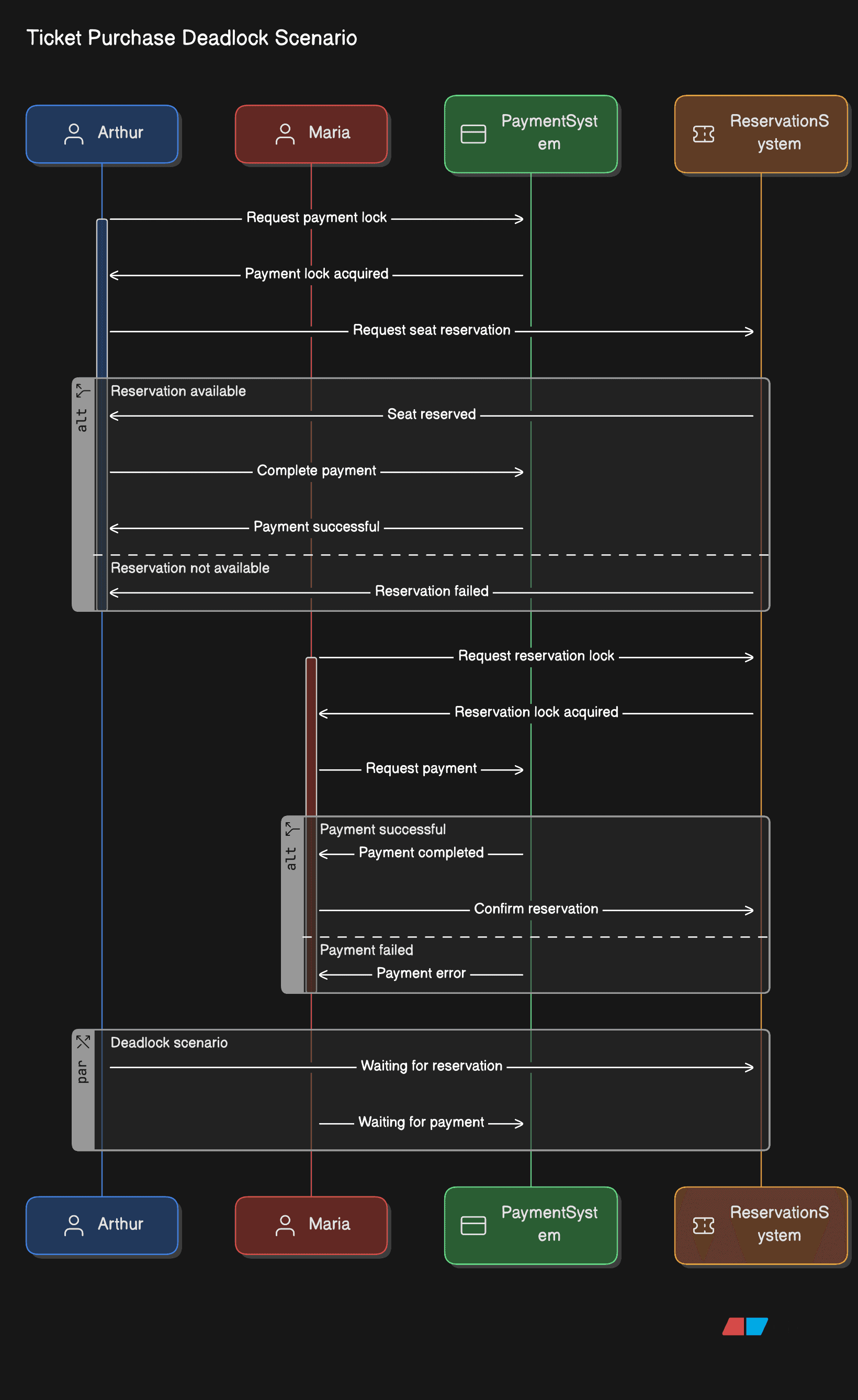

2. Deadlocks

Deadlocks happen when two or more threads are blocked forever, waiting for each other to release resources:
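A real deadlock never returns, so the sketch below probes for one instead of creating it. It assumes a hypothetical `DeadlockProbe` class in which each thread takes its first lock, waits until the other thread has done the same, and then uses `tryLock()` where ordinary code would call `lock()`. Every failed `tryLock()` marks a thread that would have blocked forever.

```java
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.locks.ReentrantLock;

final class DeadlockProbe {
    // Returns how many threads would have blocked forever with plain lock().
    static int blockedThreads() {
        ReentrantLock lock1 = new ReentrantLock();
        ReentrantLock lock2 = new ReentrantLock();
        CyclicBarrier sync = new CyclicBarrier(2);
        AtomicInteger blocked = new AtomicInteger();

        Thread thread1 = new Thread(() -> attempt(lock1, lock2, sync, blocked));
        Thread thread2 = new Thread(() -> attempt(lock2, lock1, sync, blocked));   // opposite order
        thread1.start();
        thread2.start();
        try {
            thread1.join();
            thread2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return blocked.get();
    }

    private static void attempt(ReentrantLock first, ReentrantLock second,
                                CyclicBarrier sync, AtomicInteger blocked) {
        first.lock();
        try {
            sync.await();                 // both threads now hold their first lock
            if (second.tryLock()) {       // plain lock() would wait here forever
                second.unlock();
            } else {
                blocked.incrementAndGet();
            }
            sync.await();                 // keep holding until the other thread has tried too
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            first.unlock();
        }
    }
}
```

`DeadlockProbe.blockedThreads()` returns 2. A common remedy is to make every thread acquire the locks in the same global order.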

If Thread 1 acquires lock1 while Thread 2 acquires lock2, and each then requests the lock the other one holds, they'll wait forever for each other.

This sequence diagram shows the circular dependency that creates a deadlock. Thread 1 holds lock1 and waits for lock2, while Thread 2 holds lock2 and waits for lock1. Neither can proceed.

3. Livelocks

Livelocks are similar to deadlocks, but threads aren't blocked – they're actively trying to resolve the conflict, creating an infinite loop of politeness:
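A sketch of that pattern, assuming a hypothetical `LivelockDemo` class with two "polite" workers. Each worker takes its own lock, tries for the other one, and backs off when that fails. A `CyclicBarrier` keeps the workers in lock-step so the outcome is repeatable; in real systems the same rhythm emerges from timing alone. The loop is bounded by `attempts` so the example terminates.

```java
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.concurrent.locks.ReentrantLock;

final class LivelockDemo {
    // Returns how many workers finished their task within the given number of attempts.
    static int finishedWorkers(int attempts) {
        ReentrantLock lockA = new ReentrantLock();
        ReentrantLock lockB = new ReentrantLock();
        CyclicBarrier inStep = new CyclicBarrier(2);
        AtomicInteger finished = new AtomicInteger();

        Thread t1 = new Thread(() -> politeWorker(lockA, lockB, inStep, attempts, finished));
        Thread t2 = new Thread(() -> politeWorker(lockB, lockA, inStep, attempts, finished));
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return finished.get();
    }

    private static void politeWorker(ReentrantLock mine, ReentrantLock theirs,
                                     CyclicBarrier inStep, int attempts, AtomicInteger finished) {
        try {
            for (int i = 0; i < attempts; i++) {
                mine.lock();
                try {
                    inStep.await();            // both workers now hold their own lock
                    if (theirs.tryLock()) {    // never succeeds while the workers stay in step
                        theirs.unlock();
                        finished.incrementAndGet();
                        return;
                    }
                    inStep.await();            // both have seen the conflict
                } finally {
                    mine.unlock();             // politely back off, then retry
                }
            }
        } catch (Exception e) {
            throw new IllegalStateException(e);
        }
    }
}
```

Both threads run through every attempt, yet `LivelockDemo.finishedWorkers(1000)` returns 0. Adding a random delay before each retry is the usual way to break the symmetry.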

The threads remain active but make no progress, like two people in a hallway both stepping left and right in sync.

Livelock flow: threads continuously detect conflicts and yield to each other, but both make the same decision simultaneously, resulting in an infinite loop of mutual yielding without progress.

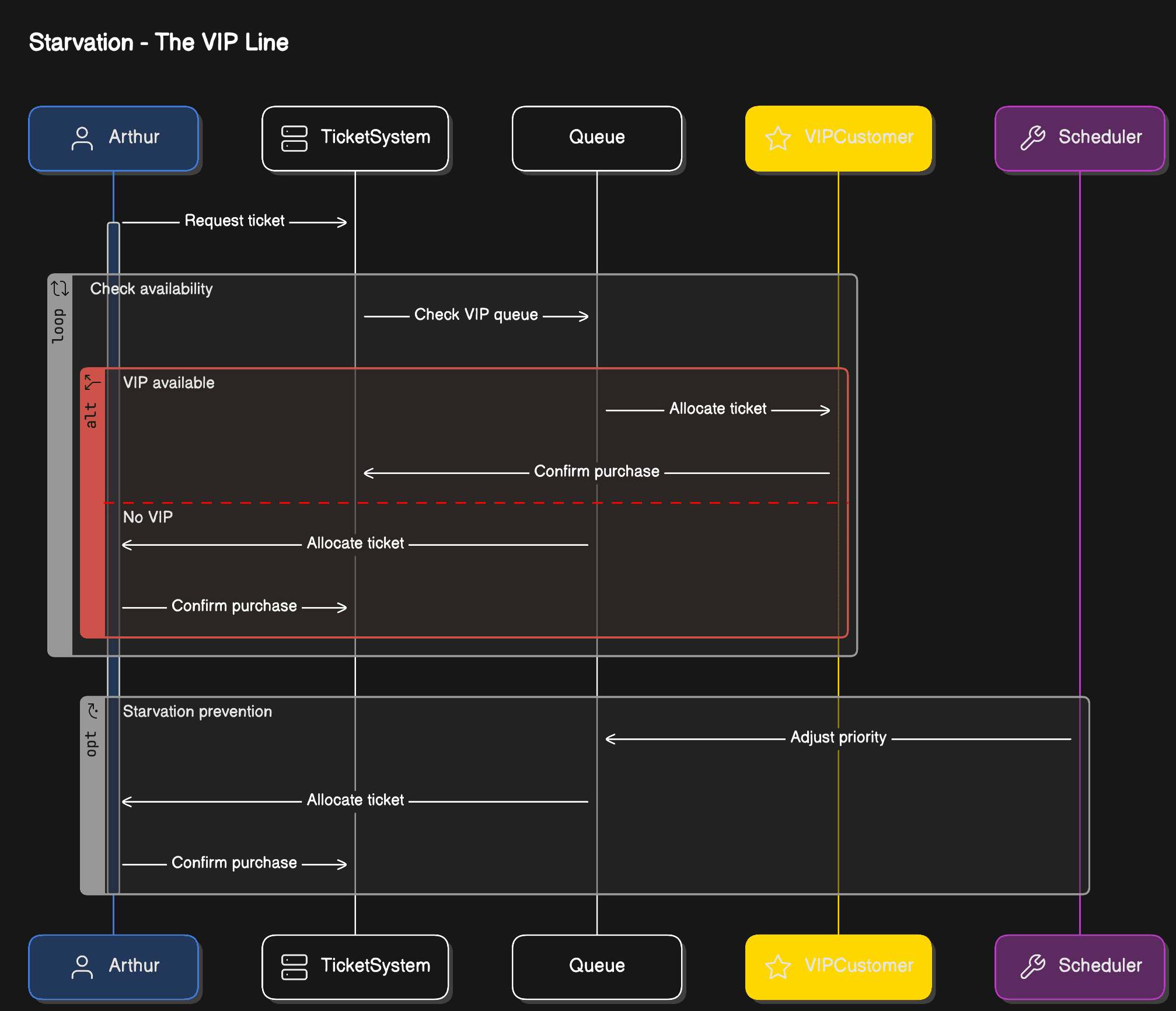

4. Starvation

Starvation occurs when a thread is perpetually denied access to resources it needs:
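Real thread priorities behave differently across operating systems, so the sketch below simulates the effect on a single thread instead. It assumes a hypothetical scheduler that always runs the highest-priority waiting task, with one new high-priority task arriving at every step. The task names are invented for illustration.

```java
import java.util.PriorityQueue;

final class StarvationDemo {
    static final class Task {
        final String name;
        final int priority;

        Task(String name, int priority) {
            this.name = name;
            this.priority = priority;
        }
    }

    // Returns true if the low-priority task got to run within the given number of steps.
    static boolean lowPriorityTaskRan(int steps) {
        // Highest priority first.
        PriorityQueue<Task> waiting =
                new PriorityQueue<>((a, b) -> Integer.compare(b.priority, a.priority));
        waiting.add(new Task("nightly-report", 1));
        waiting.add(new Task("checkout-0", 10));

        for (int step = 1; step <= steps; step++) {
            Task next = waiting.poll();                       // the scheduler picks the top task
            if (next.name.equals("nightly-report")) {
                return true;
            }
            waiting.add(new Task("checkout-" + step, 10));    // more urgent work keeps arriving
        }
        return false;
    }
}
```

`StarvationDemo.lowPriorityTaskRan(10_000)` returns false: the report is always waiting and never chosen.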

Low-priority threads might wait indefinitely while high-priority threads continuously grab resources.

Traditional Solutions and Their Limitations

Java provides several mechanisms to handle these issues, but each comes with trade-offs.

Built-in Synchronization Approaches

Let's evaluate the common strategies developers use to protect shared state:

Synchronized Keywords
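A minimal sketch of the ticket counter guarded by `synchronized`, assuming a hypothetical `SynchronizedTicketService` class. The `sellConcurrently` helper is also an illustration aid: it starts one thread per buyer and counts the confirmed sales.

```java
import java.util.concurrent.atomic.AtomicInteger;

final class SynchronizedTicketService {
    private int availableTickets;

    SynchronizedTicketService(int initialTickets) {
        this.availableTickets = initialTickets;
    }

    // Only one thread at a time can be inside any synchronized method of this
    // object, so the check and the decrement can no longer be interleaved.
    synchronized boolean purchase() {
        if (availableTickets > 0) {
            availableTickets--;
            return true;
        }
        return false;
    }

    synchronized int remaining() {
        return availableTickets;
    }

    // Hypothetical helper: one thread per buyer, returns the number of confirmed sales.
    static int sellConcurrently(int tickets, int buyers) {
        SynchronizedTicketService service = new SynchronizedTicketService(tickets);
        AtomicInteger sold = new AtomicInteger();
        Thread[] threads = new Thread[buyers];
        for (int i = 0; i < buyers; i++) {
            threads[i] = new Thread(() -> {
                if (service.purchase()) {
                    sold.incrementAndGet();
                }
            });
            threads[i].start();
        }
        for (Thread thread : threads) {
            try {
                thread.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }
        return sold.get();
    }
}
```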

Pros: Simple, prevents race conditions

Cons: Poor scalability, potential for deadlocks

Volatile Fields
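A sketch of the case `volatile` handles well: a stop flag written by one thread and read by another. The `StoppableWorker` class and its `stopsPromptly` helper are hypothetical names used for illustration. Without `volatile`, the worker loop would not be guaranteed to ever see the updated flag.

```java
final class StoppableWorker implements Runnable {
    private volatile boolean running = true;   // the write in stop() is visible to the worker thread
    private long iterations;                   // used only by the worker thread

    @Override
    public void run() {
        while (running) {
            iterations++;                      // stand-in for real work
        }
    }

    void stop() {
        running = false;
    }

    // Hypothetical helper: starts a worker, asks it to stop, and reports whether it exited.
    static boolean stopsPromptly() {
        StoppableWorker worker = new StoppableWorker();
        Thread thread = new Thread(worker);
        thread.start();
        worker.stop();
        try {
            thread.join(2000);                 // wait up to two seconds for the loop to end
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return !thread.isAlive();
    }
}
```

Note that `volatile` would not make `iterations++` safe if several threads shared it, because the increment is a read followed by a write.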

Pros: Ensures visibility of changes across threads

Cons: Makes only individual reads and writes safe; compound operations such as check-then-act or count++ can still race

Atomic Classes
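A sketch of the ticket counter built on `AtomicInteger`, assuming a hypothetical `AtomicTicketService` class. The purchase uses a compare-and-set retry loop: it reads the current value, and the decrement only takes effect if no other thread changed the counter in the meantime. The `sellConcurrently` helper is an illustration aid.

```java
import java.util.Collections;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;

final class AtomicTicketService {
    private final AtomicInteger availableTickets;

    AtomicTicketService(int initialTickets) {
        this.availableTickets = new AtomicInteger(initialTickets);
    }

    boolean purchase() {
        while (true) {
            int current = availableTickets.get();
            if (current <= 0) {
                return false;                                   // sold out
            }
            if (availableTickets.compareAndSet(current, current - 1)) {
                return true;                                    // nobody interfered, sale confirmed
            }
            // Another thread changed the counter first: re-read and retry.
        }
    }

    // Hypothetical helper: runs the purchase attempts on a small pool and counts the sales.
    static int sellConcurrently(int tickets, int buyers) {
        AtomicTicketService service = new AtomicTicketService(tickets);
        List<Callable<Boolean>> attempts = Collections.nCopies(buyers, service::purchase);
        ExecutorService pool = Executors.newFixedThreadPool(4);
        int sold = 0;
        try {
            for (Future<Boolean> result : pool.invokeAll(attempts)) {
                if (result.get()) {
                    sold++;
                }
            }
        } catch (InterruptedException | ExecutionException e) {
            throw new IllegalStateException(e);
        } finally {
            pool.shutdown();
        }
        return sold;
    }
}
```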

Pros: Better performance than synchronized

Cons: Limited to simple operations, doesn't solve complex coordination

The Caching Conundrum

Modern applications often add caching layers for performance, which introduces additional complexity.

Distributed Caching and Consistency Problems

When caching is introduced across multiple instances, new coordination challenges emerge:
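A small in-process sketch of the stale-read problem. Everything here is a stand-in invented for illustration: a plain `HashMap` plays the shared database, and each hypothetical `CachedInventory` object plays one application instance with its own local cache.

```java
import java.util.HashMap;
import java.util.Map;

final class CachedInventory {
    private final Map<String, Integer> database;                       // stand-in for the shared database
    private final Map<String, Integer> localCache = new HashMap<>();   // per-instance cache

    CachedInventory(Map<String, Integer> database) {
        this.database = database;
    }

    int availableTickets(String event) {
        // Serve from the local cache; fall back to the database on a miss.
        return localCache.computeIfAbsent(event, database::get);
    }

    void recordSale(String event) {
        int remaining = database.get(event) - 1;
        database.put(event, remaining);
        localCache.put(event, remaining);   // only this instance's cache is refreshed
    }
}

final class StaleCacheDemo {
    // Returns {database value, instance A's view, instance B's view} after one sale on A.
    static int[] run() {
        Map<String, Integer> database = new HashMap<>();
        database.put("concert", 1);
        CachedInventory instanceA = new CachedInventory(database);
        CachedInventory instanceB = new CachedInventory(database);

        instanceA.availableTickets("concert");   // both instances warm their caches
        instanceB.availableTickets("concert");
        instanceA.recordSale("concert");         // A sells the last ticket

        return new int[] {
            database.get("concert"),
            instanceA.availableTickets("concert"),
            instanceB.availableTickets("concert")
        };
    }
}
```

`StaleCacheDemo.run()` returns `{0, 0, 1}`: instance B still offers a ticket that no longer exists.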

Now we have to worry about cache invalidation across multiple instances, consistency between cache and database, and distributed locking in clustered environments.

Why OOP Struggles with Concurrency

Object-Oriented Programming's core principle of encapsulation assumes that objects can protect their internal state. But when multiple threads access the same object, this protection breaks down.

The Fundamental Mismatch

These are the core reasons OOP's encapsulation model fails at scale:

- Encapsulation isn't enough: Private fields don't protect against concurrent access

- Method-level synchronization is too coarse: It creates unnecessary bottlenecks

- Complex object graphs require complex locking: Leading to deadlock risks

- Inheritance complicates thread safety: Subclasses might break parent class assumptions
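The inheritance point can be shown concretely. In Java, the `synchronized` modifier is not inherited when a method is overridden, so a subclass can silently drop the parent's protection. The sketch below assumes two hypothetical classes, `SafeCounter` and `LoggingCounter`, and uses reflection to check which versions of `increment` are actually synchronized.

```java
import java.lang.reflect.Modifier;

class SafeCounter {
    protected int count;

    public synchronized void increment() {
        count++;
    }

    public synchronized int get() {
        return count;
    }
}

class LoggingCounter extends SafeCounter {
    @Override
    public void increment() {   // 'synchronized' was not repeated, so this override is unguarded
        count++;
    }
}

final class InheritanceCheck {
    // Reports whether the method declared on the given class carries the synchronized modifier.
    static boolean isSynchronized(Class<?> type, String methodName) {
        try {
            return Modifier.isSynchronized(type.getDeclaredMethod(methodName).getModifiers());
        } catch (NoSuchMethodException e) {
            throw new IllegalArgumentException(e);
        }
    }
}
```

`InheritanceCheck.isSynchronized(SafeCounter.class, "increment")` is true, while the same check on `LoggingCounter.class` is false, even though callers holding a `SafeCounter` reference cannot tell the difference.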

The Mental Model Problem

Perhaps the biggest challenge is that shared mutable state requires developers to think about all possible interleavings of thread execution. This quickly becomes mentally overwhelming:

- With 2 threads and 3 operations each, there are 20 possible execution orders

- With 3 threads and 4 operations each, there are 34,650 possible execution orders

- With realistic applications having hundreds of threads... the complexity explodes
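These counts follow from a standard formula. With n threads that each perform k operations in a fixed internal order, the number of distinct interleavings is (n·k)! / (k!)^n. The sketch below computes it with `BigInteger`; the `Interleavings` class name is an assumption made for illustration.

```java
import java.math.BigInteger;

final class Interleavings {
    // Number of ways to interleave `threads` sequences of `opsPerThread` ordered steps:
    // (threads * opsPerThread)! / (opsPerThread!)^threads
    static BigInteger count(int threads, int opsPerThread) {
        BigInteger result = factorial(threads * opsPerThread);
        BigInteger perThread = factorial(opsPerThread);
        for (int i = 0; i < threads; i++) {
            result = result.divide(perThread);   // always divides exactly
        }
        return result;
    }

    static BigInteger factorial(int n) {
        BigInteger product = BigInteger.ONE;
        for (int i = 2; i <= n; i++) {
            product = product.multiply(BigInteger.valueOf(i));
        }
        return product;
    }
}
```

For example, `Interleavings.count(2, 3)` gives 20, which is 6! / (3! · 3!).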

A Different Path Forward

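An actor-based model addresses these problems by removing shared state entirely. Instead of multiple threads accessing the same data, each piece of state is owned by exactly one actor, and the rest of the system interacts with it only by sending it messages.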

How Actors Solve the Shared State Problem

By shifting from shared state to message passing, the Actor Model provides:

Key Architectural Benefits

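Each actor owns its state completely, and communication happens only through messages. Because an actor processes one message at a time, its state needs no locks and cannot suffer data races, and lock-ordering deadlocks disappear along with the locks. Actors also provide natural fault isolation and supervision.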

Consider how our ticket service might look with actors:
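The following is a hand-rolled sketch in plain JDK code, not the Akka or Pekko API. It assumes a hypothetical `TicketActor` class in which a single-threaded executor plays the role of the mailbox: each submitted task is one message, and the counter is only ever touched by the mailbox thread. The `successfulPurchases` helper is an illustration aid that sends purchase messages from several threads at once.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.stream.Collectors;
import java.util.stream.IntStream;

final class TicketActor {
    // The mailbox: one thread that handles one message at a time, in arrival order.
    private final ExecutorService mailbox = Executors.newSingleThreadExecutor();
    private int availableTickets;   // after construction, touched only by the mailbox thread

    TicketActor(int initialTickets) {
        this.availableTickets = initialTickets;
    }

    // "Sends" a purchase message; the returned future completes with the actor's reply.
    CompletableFuture<Boolean> purchase() {
        return CompletableFuture.supplyAsync(() -> {
            if (availableTickets > 0) {
                availableTickets--;
                return true;
            }
            return false;
        }, mailbox);
    }

    void stop() {
        mailbox.shutdown();
    }

    // Hypothetical helper: buyers send messages in parallel, then the replies are counted.
    static long successfulPurchases(int tickets, int buyers) {
        TicketActor actor = new TicketActor(tickets);
        List<CompletableFuture<Boolean>> replies = IntStream.range(0, buyers)
                .parallel()
                .mapToObj(i -> actor.purchase())
                .collect(Collectors.toList());
        long sold = replies.stream().filter(CompletableFuture::join).count();
        actor.stop();
        return sold;
    }
}
```

With one ticket and two buyers, `TicketActor.successfulPurchases(1, 2)` returns 1, whichever message reaches the mailbox first.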

No synchronization keywords, no locks, no race conditions – just simple, sequential processing of messages.

Looking Ahead

The problems we've explored today (race conditions, deadlocks, livelocks, and starvation) have plagued concurrent programming for decades. Traditional OOP solutions work, but they often add more complexity than they remove.

In our next article, we'll explore how the Actor Model provides elegant solutions to these challenges and dive into practical implementation patterns using Akka and Apache Pekko on the JVM.

The journey from shared state to message-passing architectures isn't just about avoiding bugs – it's about building systems that are more scalable, maintainable, and resilient to failure.

Continue Your Actor Model Journey

Ready to see how these problems are solved? Part 3 walks through the complete implementation, covering supervision strategies, testing patterns, and real-world lessons learned building Actor systems in production.


Further Reading

- Hewitt, C., Bishop, P. & Steiger, R. (1973). "A Universal Modular ACTOR Formalism for Artificial Intelligence" — IJCAI. The original Actor Model paper

- Dijkstra, E.W. (1965). "Solution of a Problem in Concurrent Programming Control" — CACM 8(9). The foundational shared state / mutual exclusion paper

- Lamport, L. (1978). "Time, Clocks, and the Ordering of Events in a Distributed System" — CACM 21(7). Foundational work on message ordering in distributed systems

- Goetz, B. et al. (2006). Java Concurrency in Practice — Addison-Wesley. The definitive guide to the concurrency pitfalls discussed in this article